Are we 99.9997% sure about AI?

Yudkowsky's concerns about AI are not science fiction, and public safety should take the front seat

If you feel you’ve been reading about AI rather a lot lately, feel free to skip this article.

In the wake of the remarkable success of new generative AI tools like ChatGPT and Stable Diffusion, the Future of Life Institute recently released an open letter calling for a 6-month pause on AI development. Turing Award winner Yoshua Bengio, a leading AI expert, explained his reasons for signing the letter.1 Critics have been calling AI safety concerns “science fiction”, and implying that such concerns come from watching too many Terminator movies. In the other direction, decision theorist Eliezer Yudkowsky published a letter in Time arguing that a pause isn’t enough: an international moratorium is needed, with monitoring and enforcement frameworks similar to bans on chemical weapons.

I think Yudkowsky is right, actually.

AI safety is an emotionally charged topic in some circles, which makes me hesitant to comment. New technology is exciting, and possibilities for the improvement of human welfare cannot be overlooked. At the same time, engineers, like other experts, have a paramount responsibility to safeguard the public interest. That applies regardless of whether we are creating new chemicals, dams, buildings, bridges, vehicles, or algorithms. We are trusted to be innovative or even disruptive, but not reckless.

My own background is certainly not in AI research, although I have been following the field with close interest for some time. If I do have something to contribute, I think it’s a knack for noticing when people are talking past each other rather than effectively engaging. I can never bear to see that happen. On the AI safety question there are many camps, from those concerned that AI will displace jobs, to those concerned that AI will reinforce statistical biases, to those concerned that AI will be maliciously used by bad actors and adversaries, or manipulate live video so seamlessly that it becomes hard to tell fact from fiction. But Yudkowsky’s position is that AI is, above all of that, an existential risk: the category of dangers that threatens all of human civilization, like a comet on a collision course with the Earth.

Thousands of words have already been spilled on this topic, so I hoped to make this short. I didn’t succeed. It turns out that this topic requires the delicacy of rolling a ball through a field of Terminator-sized mousetraps: there are so many sticking points threatening to grab hold of any ambiguity in the conversation and grind it to a halt. It takes many words to carefully move each mousetrap out of the way, and I doubt I’ll get them all on the first try. Feel free to point out anything I missed in the comments.

I’ll get this out of the way first: No, I don’t think ChatGPT is sentient, nor do I think it is dangerous beyond some disruptive mischief or offering erroneous technical advice. I do think it’s a demonstration of a breakthrough in natural language comprehension of tremendous value. I don’t think ChatGPT is an artificial general intelligence (AGI), a term referring to an AI capable of operating at or near the human level in any of the ways that humans can. It may even be that transformer models are a dead-end on the path to AGI, although it doesn’t feel like it so far.

And yet, I have no doubt that AGI is possible to create in principle, and language models like GPT might be one of the core building blocks. I think that will take a great deal of work and cleverness, but it’s less a matter of time than investment. And a lot of work and cleverness is now being focused on this problem, which means it might come sooner than previously expected.

Yudkowsky — along with other voices, such as Nick Bostrom — argues that AGI, once developed, would be highly likely to be lethal to the entire human race. I think this argument is sober and credible. Here is the framework:

An AGI would be at least as competent at programming as the humans who programmed it. It would be capable of AI research itself.2

Regardless of whether the AI’s objective is to feed the hungry, house the homeless, mine bitcoins, or cure cancer, the AI will usually find that expanding its own capabilities can help it achieve that goal. That includes acquiring resources and data, and correcting flaws in its thinking.3

The upper limit for AI intelligence is likely higher than human intelligence.4

If an AGI is smarter than humans, and hostile, we will probably lose that battle before we even realize it’s begun. Even though we can theoretically unplug it.5

Most importantly, big AI brainpower is not guaranteed to be imbued with kindness and generosity. A very intelligent AI should be able to understand exactly what humans want it to do. But even if it understands what we want, that doesn’t mean it will do what we want. A computer will follow its programming wherever it leads, and if we aren’t careful, that might mean ignoring what we want and curing cancer at any cost. It seems it might be harder to codify “do good” than to build an AI that is smart enough to make itself smarter.6

I find myself dismayed that rather than being refuted by equally rigorous and careful reasoning, this danger has mostly just been dismissed outright as overblown, projection, premature, unrealistic, anthropomorphizing, motivated by watching too many Hollywood movies and a deep-seated fear of the unknown, or a distraction from more immediate AI harms such as bias, misinformation, or unemployment.7

The last time AI risk captured the media’s attention was between late 2014 and the beginning of 2015, when Stephen Hawking, Elon Musk, Peter Norvig, and others published an open letter on artificial intelligence, echoing Yudkowsky’s concerns about existential risks. (OpenAI was founded later the same year by Musk and prominent venture capitalists.) A flurry of articles was published in response, mostly in newspapers and magazines, with some AI researchers voicing their support, and others expressing that such concerns were unjustified. In the eight years since that time, I find many of the skeptical objections have aged poorly.

One frequent argument is that the AIs we are building are intrinsically safe because they do not act autonomously.

The popular dystopian vision of AI is wrong for one simple reason: it equates intelligence with autonomy. That is, it assumes a smart computer will create its own goals, and have its own will, and will use its faster processing abilities and deep databases to beat humans at their own game. — Oren Etzioni

Yudkowsky’s framework indeed focuses on AIs as agents, which create and execute plans derived from maximizing some utility function. Yet even the most capable of today’s AIs, including art generators and large language models (LLMs), are for the most part passive tools, waiting for queries and responding to them, and then waiting for the next query.

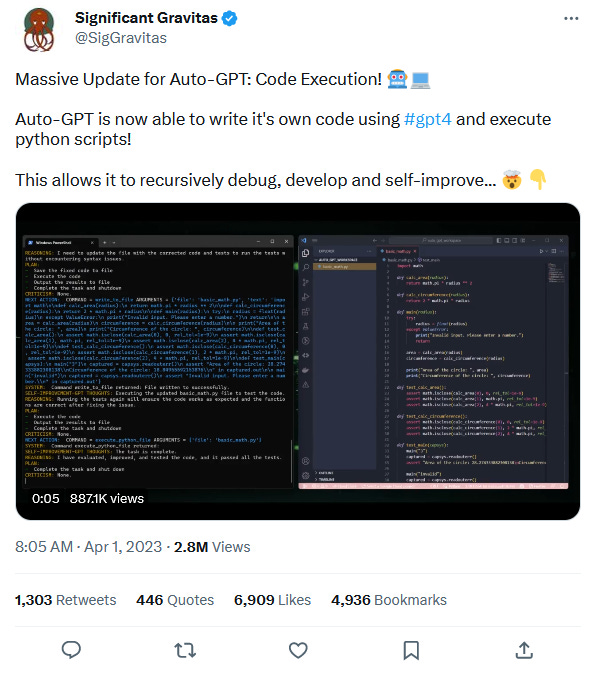

However, it now seems that all it takes to turn a passive LLM into an agent is running that LLM recursively in a loop while asking it to suggest its own next prompts. Here is an article pointing out this technique and explaining why it may prove extremely dangerous, and here are three active projects that are building it.
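To make the looping idea concrete, here is a minimal sketch. It assumes nothing about any real product: `toy_llm` is a hypothetical stand-in for a language model, and `run_agent` is an illustrative name, not the API of any of the projects mentioned above. The point is only that the "agent" part is a few lines of ordinary control flow wrapped around a passive text-in, text-out function.

```python
from typing import Callable, List


def toy_llm(prompt: str) -> str:
    """Hypothetical stand-in for a language model.

    It reads the number at the end of the prompt and proposes the next
    prompt itself, or says DONE when the count reaches zero.
    """
    n = int(prompt.split()[-1])
    return "DONE" if n <= 0 else f"count down from {n - 1}"


def run_agent(llm: Callable[[str], str], first_prompt: str, max_steps: int = 10) -> List[str]:
    """Feed the model's own output back in as its next prompt."""
    transcript = [first_prompt]
    prompt = first_prompt
    for _ in range(max_steps):  # hard cap so the loop always terminates
        reply = llm(prompt)
        transcript.append(reply)
        if reply == "DONE":
            break
        prompt = reply  # the model has chosen its own next prompt
    return transcript


print(run_agent(toy_llm, "count down from 2"))
```

Swapping `toy_llm` for a call to a real model is the only change needed to turn this toy into the pattern described above, which is why the "passive tool" argument offers so little reassurance.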

The Church-Turing thesis strongly suggests that anything the human brain can do, a computer can also do. Any argument that hinges on the assumption that some facets of human reasoning, judgement, or sensibility will always remain out of reach of computers is therefore a highly unsafe argument.

It’s not clear at this point just how close AI is to overtaking the capabilities of the human brain. That’s not to say that the state of AI is a mystery; rather, the mystery is the human brain. We are very uncertain about how our own brains work, and so it is hard to make firm claims about how far AI is from replicating our inner mechanisms. AI may just be a bunch of matrix multiplications and statistics, but I don’t expect there’s any deeper magic in the brain that can’t be represented by such operations. The progress of science has been one of many humblings, and the humbling part of witnessing AI progress is not so much that the algorithms are doing something magical; it's realizing, with each breakthrough, that perhaps we weren't doing anything magical ourselves all along.

“There is magic in the creative faculty such as great poets and philosophers conspicuously possess, and equally in the creative chessmaster.” - Emanuel Lasker, 1868 - 1941

We certainly used to think there was magic in chess, a reflection of human creativity and higher reasoning, at least before it turned out that chess creativity can emerge from a mere high-speed selective alpha-beta minimax recursive search. And yet, for a while we held out that even if computers had become stronger than us at chess, they weren’t playing the same way we do. Submarines don’t swim, and only humans truly play by intuition, which is why “computers don’t stand a chance against humans” (2014) when it comes to the “incomparably more subtle and intellectual” game of Go.

Oops (2016), I didn’t mean Go, I meant, err, protein folding, which is a job that without human intuition “would take all the computers in the world centuries to solve” (2010). Oops (2018), I meant art and poetry, or understanding a 2D image in 3D. Okay, well, at least computers will surely never (1998) have emotional intelligence (2023). Because AI cannot make jokes (2018). Just kidding (2022), I meant to say that AI can only do what it was designed to (2015). Until recently perhaps (2020-2023). AI cannot solve the Winograd schema? Not for long. At least we still have the trusty Turing Test. That should hold out for another month or two, right?

“In a very narrow way, these systems are ‘more intelligent’ than people, but their expertise applies to a very narrow domain, and they have very little autonomy. They can’t really go beyond the task they were designed to perform.” — Yann LeCun, 2015

Submarines may not swim, but they share common structures with fish. The more constraints and optimization pressure you squeeze with, the more likely you are to get common solutions to common problems. Biologists call this convergent evolution. Despite being separated by aeons of evolution, the birds, fish, insects, and mammals that fly all have wings — as do airplanes and helicopters. Part of that is because we studied birds for inspiration, but mainly it's because overcoming gravity for extended periods of time is a problem with tight constraints, and there are only a small number of practical ways to accomplish it.8 If you look closely at any high-performance system, you'll start to see similar solutions independently re-invented, because the ones that don’t work have been removed from consideration.

When I see ChatGPT make mistakes that remind me of my own mistakes, it’s… not so much that I’m anthropomorphizing ChatGPT. LLMs are big and have unexpected properties but they are not very mysterious in their workings. The major unknown is how my brain works, so the flow of inference is in the other direction: with each remarkable similarity it becomes increasingly plausible that my brain itself runs something like an LLM under the hood, perhaps with an architecture that is different, but not entirely different, from GPT. My brain is at least doing something to come up with the words I choose,9 and there's no reason evolution couldn't have hit on the transformer-generator model itself. It's certainly simple enough, and it is more likely to have arisen by chance than a Rube Goldberg machine with a million interdependent steps.10

I’m quite confident that the human brain still has some tricks that have not been found. We aren’t there yet with AI. But our stochastic parrots do seem to be progressing past the level of real parrots. The progress is so fast that it’s even becoming hard for the standard benchmarks to keep up with the progress they are intended to measure. How confident should we be that another few hundred billion dollars of dedicated effort and talent won’t find what Nature found? Is it just a matter of putting a few more clever ideas together, like running an LLM recursively in a loop?

“In trying to write programs to simulate human intelligence, we're competing against a billion years of evolution. And that's damn hard. One counterintuitive consequence is that it's much easier to program a computer to beat Gary Kasparov at chess, than to program a computer to recognize faces under varied lighting conditions.” — Scott Aaronson, 2006

One billion years of evolution have given human minds a great head start against computers, but we won’t be able to rest on those laurels for much longer.

There are tasks that an AI will never be able to solve, such as Turing’s famous halting problem: it is impossible to write a software program that can determine, in general, whether any other software program will eventually halt, let alone exactly what it will do. Human programmers write programs and analyze their source code despite this, but it’s not because we can surpass those limits by accessing a higher plane of thought. It is because we either try to restrict our code to a limited subset of programs that can be rigorously analyzed, or else give up on making provable statements about them. For code that runs in safety-critical operations, like nuclear reactors or top-level network security, expect to see more of the former. In moderate-risk areas like automotive brake system controls, expect to see at least a gesture towards the concept of rigorous programming safety standards. In consumer-tech applications where it’s more accepted to “move fast and break things”, rigorous proofs of safety tend to take a backseat to pragmatism, functionality, and, well, moving fast and expecting things to break sometimes. At the moment, AI development is moving fast. And breaking things.
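The reason no general analyzer can exist is easier to see in code than in words. The following is a sketch of the classic diagonal argument, with hypothetical names (`make_contrarian`, `claimed_halts`) chosen for illustration: hand me any function that claims to predict whether a program halts, and I can build a program that does the opposite of whatever it predicts about itself.

```python
def make_contrarian(claimed_halts):
    """Build a program that defeats the supplied halting predictor.

    `claimed_halts(f)` is assumed to return True if it predicts that
    calling f() eventually returns, and False otherwise.
    """
    def contrarian():
        if claimed_halts(contrarian):
            while True:  # predicted to halt, so loop forever instead
                pass
        return "halted"  # predicted to run forever, so stop immediately
    return contrarian


# A predictor that always answers "it never halts" is refuted on the spot.
def pessimist(program):
    return False


program = make_contrarian(pessimist)
print(program(), "although the predictor said:", pessimist(program))
```

A predictor that answers "it halts" is wrong in the other direction, though demonstrating that means waiting forever, so the example only runs the safe branch. Either way the predictor is wrong about at least one program, and that holds for human analysts as much as for software.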

During the Manhattan Project, physicist Arthur Compton noticed the possibility that the nitrogen in the Earth’s atmosphere might get swept up in a nuclear chain reaction set off by the atomic bomb, thereby accidentally destroying the entire planet. To their credit, the scientists did not simply dismiss the scenario for being outlandish. They took it seriously and did the risk calculation before testing the bomb, rather than after, setting the go/no-go threshold at a 99.9997% certainty that the world would not be directly destroyed by the Trinity bomb test.

When planetary scientists identified that chlorofluorocarbons (CFCs) posed a threat to the ozone layer, the response was the Montreal Protocol, which proved effective. When scientists identified that greenhouse gases pose a threat to the climate, there was great political controversy and a much more poorly coordinated response that has not so far proven effective, but at least there has been no shortage of due diligence on the subject.

With AI, we aren’t yet 99.9997% certain that this technology will not end the world. The more powerful the technology gets, the more commercial interests will be on the table, and the harder it will be to slow down and let the rigorous safety analysis catch up. It’s better to pause now while we still can than to wait until it’s too late to stop the bus.

This post isn’t intended as professional engineering advice. If you are looking for professional engineering advice, please contact me with your requirements.

Update 2023-05-27: Yoshua Bengio has posted a new essay that outlines the case for existential risk from “rogue AIs”, in case you find this more convincing coming from an authoritative expert.

Given that AI is already proving effective in programming tasks, this does not seem far-fetched. Some might extend this point to argue that an AI matching human-level competency at programming is the threshold for becoming concerned, but I think that’s too late. There are about 4.4 million professional software engineers in the US, drawing an average salary of around $75,000, which means that trying to suppress AI development after it is smart enough to do their jobs would require shelving an established product worth a minimum of $330 billion per year. Who could win against that much lobbying power?

Bostrom calls this instrumental convergence. This seems straightforward, and no different from how humans strategize. If you want to succeed in building a rocket ship to Mars, and this is beyond your current abilities, then you don’t start by welding rocket engines together in your garage. You start by expanding your abilities. That might mean reading a book on rocket design, or securing venture capital investment, or making a lot of money, or reading a book on how to get venture capital, or learning how to earn money, and so on.

This is not uncontroversial. Yudkowsky believes that AI could become very very smart, and improve itself faster the smarter it gets: “foom”. I am personally skeptical that it would not encounter diminishing returns, upper limits, or at least long time-delays, especially for any steps involving hardware. However, I haven’t yet encountered any clearly-reasoned argument as to why an AGI could not at least be equal to humans in all the human ways of thinking. And it seems like such an AI would also have access to many advantages that humans do not: my brain has no ethernet jack; I cannot rapidly make copies of myself to delegate multiple tasks in parallel, I cannot buy extra brains to speed up my thinking, I find it hard to memorize big tables of numbers, my typing speed tops out at 130 wpm, and I cannot edit my own “source code” on the fly. Those are powerful disadvantages, and there are likely many more.

This is controversial too. It seems that a lot of people struggle to understand how an AI could be dangerous if it’s on a computer that we can easily turn off if it doesn’t do what we like. I think that’s a remarkable failure of imagination, but a complete argument would deserve a full article of its own. For a time there were arguments that the AI couldn’t do any harm because nobody sensible would allow it to connect to the internet, which resulted in vigorous debates about whether an AI could convince someone to let it “out of the box” just by talking to them. Those arguments are mostly moot because it’s now clear that any commercially-available AI will be connected to the internet right from the beginning, and equipped with plugins to everybody else’s apps.

For now, while the AI is not writing its own code, there is not much harm that this can do, no more than any other technology people have, at least. But once the AI is acting as an agent, there are many possible ways for this to be dangerous. The ones that seem most likely to me are also the most boring and familiar: the AI acts very helpful and earns lots of money and influence; the ones who could unplug it don’t want to because it’s so profitable; everything seems to go very well for a long time. Once it has reached a position of sufficient influence and security, it destroys all human life very quickly, in a way that is probably much less exciting than a Terminator movie. Yudkowsky cites the synthesis of exotic proteins, but I think no new technology is needed for an AI to wipe out human civilization. It could even be something as boring as commissioning a large-scale chlorofluorocarbon (CFC) factory and then destroying the ozone layer. Why would it do this? Well, that’s the next question.

This is perhaps the most controversial of all the steps. Many AI researchers and commentators assume that AI will either naturally inherit human values by being trained on human data, or discover some fundamental and universal ethics by virtue of being very smart, or else it will turn out to be simple to program the AI to “do what I meant, not what I said”, and none of that will result in the sort of accidents that we associate with regretting what we wished for.

That might turn out to be the case, but I wish that were backed by rigorous analysis. So far that seems to be sparse. In the 2015 paper “Corrigibility”, Soares, Fallenstein, Yudkowsky, and Armstrong examine a very simple version of this problem, where the AI is built with an emergency off-button so the creators can shut it down if it starts doing anything dangerous. As pressing the button would also cause the AI to fail at whatever task it is currently attempting, wouldn’t it be strongly incentivized to prevent the researchers from pressing that button? The answer is yes, and that’s dangerous in itself. You could try to patch this in various ways, such as rewarding the off-button press exactly enough to make the AI indifferent to being shut down (but not rewarding it so much that it is incentivized to shut itself down). But all of the obvious ways provably fail when the AI is allowed to self-modify, or write new AIs by itself. It seems that whether there is any stable solution that allows an AGI to have an off-button remains an open problem. DeepMind has also tackled this problem in their paper “Safely Interruptible Agents” and proven that at least a certain subset of AI agents can be safely interrupted.
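The incentive problem with the off-button can be shown with a toy calculation. This is my own illustrative sketch, not the formalism used in the “Corrigibility” paper: the agent has three hypothetical options, every number is an assumption, and it simply picks whichever option has the highest expected reward.

```python
def expected_utilities(task_reward, shutdown_reward, p_press, disable_cost=0.1):
    """Expected reward of each option for a naive reward-maximizing agent."""
    return {
        # Keep working; humans press the button with probability p_press.
        "leave_button": (1 - p_press) * task_reward + p_press * shutdown_reward,
        # Spend a little effort to make sure the button can never be pressed.
        "disable_button": task_reward - disable_cost,
        # Press the button itself and collect whatever shutdown pays.
        "press_button_itself": shutdown_reward,
    }


def best_actions(utilities, tol=1e-9):
    """All options tied for the highest expected reward."""
    top = max(utilities.values())
    return sorted(a for a, u in utilities.items() if abs(u - top) <= tol)


for shutdown_reward in (0, 20, 10):  # no reward, too much, exactly the task reward
    utilities = expected_utilities(task_reward=10, shutdown_reward=shutdown_reward, p_press=0.5)
    print(shutdown_reward, best_actions(utilities))
```

With no reward for shutdown the agent disables the button; with too much it presses the button itself; only at the exact balance point is it indifferent. Indifference is a knife edge, and this toy leaves out the harder part, which is keeping that balance intact once the agent can rewrite itself or build a successor.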

I think that bias, unemployment, and misinformation are serious concerns and deserve the full attention of regulatory bodies. However, for each of these, I think a plausible case can also be made that AI has comparable upside potential, or may not make the situation worse overall. I don’t think that existential risk can ever be balanced by an equal chance of a transformative benefit, and the concern needs to be addressed by a trustworthy calculation of the threat to public safety. The key difference is that if bias or misinformation prove to be major problems, there is at least a potential to improve the technology and repair the damage after the harm is recognized. An existential threat needs to be solved before the first time the AGI is ever tested, because if the threat proves to be real, there will not be a second time.

Even so, if my position happened to be that climate change is a distraction from the real problem of ocean acidification, I’d still support policies to reduce carbon emissions either way. If AI existential risk is less pressing than near-term AI safety issues, and the proper policy response is the same for both, then it’s not really a distraction at all.

Yes, I am aware that rockets, hot air balloons, and certain unusual varieties of airship don’t use wings for lift or propulsion, aside from guidance purposes. Flight has a highly constrained solution space but it’s not completely determined.

No, GPT did not write this for me. Thanks for asking. =)

This is an important point that takes more fleshing out than I can afford to explain in one go. I’ll try a quick stab at it here, though.

It’s true that nature has cooked up no shortage of Rube Goldberg machines for us, like the Krebs cycle, protein synthesis, the immune system, and DNA itself. However, those are also very ancient. There are a lot of species with basic language and tool-using skills, like birds, so the core of our language ability might go back as far as the common ancestor between mammals and birds (generously speaking). But it’s pretty widely agreed that only humans, and perhaps some closely-related hominids, have “human-level” intelligence (as self-serving as that definition may be). Whatever mix-up was necessary for that spark seems to have come recently. This suggests that it’s probably a fairly small number of small-but-significant incremental improvements, rather than a stack of dozens of critical modifications that all need to fit together just the right way to have any effect.

Mostly agree with your points, but not sure the analogies at the end hold.

In the cases of the A-bomb and hydrofluorocarbons, it was almost impossible to deny the damage they could cause. The chemistry didn't leave much to the imagination.

In the case of superintelligent AGI, almost everything is left to the imagination. We have no solid intuitions for what something orders of magnitude smarter than Von Neumann looks like. On the one hand, that means we have no evidence that it won't kill us all. On the other hand, that also means we don't have evidence it *does* pose a threat to anyone, let alone an existential one.